“I’m completely operational and all my circuits are functioning normally.” — Hal 9000, “2001, A Space Odyssey”

We now have more information about the fatal crash of a self-driving Tesla Model X P100D electric vehicle in Mountain View, California last March, thanks to a preliminary accident report published by the US NTSB. Unfortunately, driver Walter Huang was killed in this crash.

First, here’s the situation taken verbatim from the NTSB report:

“As the vehicle approached the US-101/State Highway (SH-85) interchange, it was traveling in the second lane from the left, which was a high-occupancy-vehicle (HOV) lane for continued travel on US-101.

“According to performance data downloaded from the vehicle, the driver was using the advanced driver assistance features traffic-aware cruise control and autosteer lane-keeping assistance, which Tesla refers to as “autopilot.” As the Tesla approached the paved gore area dividing the main travel lanes of US-101 from the SH-85 exit ramp, it moved to the left and entered the gore area. The Tesla continued traveling through the gore area and struck a previously damaged crash attenuator at a speed of about 71 mph. The crash attenuator was located at the end of a concrete median barrier.”

(Note: According to the NTSB report, “A gore area is a triangular-shaped boundary created by white lines marking an area of pavement formed by the convergence or divergence of a mainline travel lane and an exit/entrance lane.”)

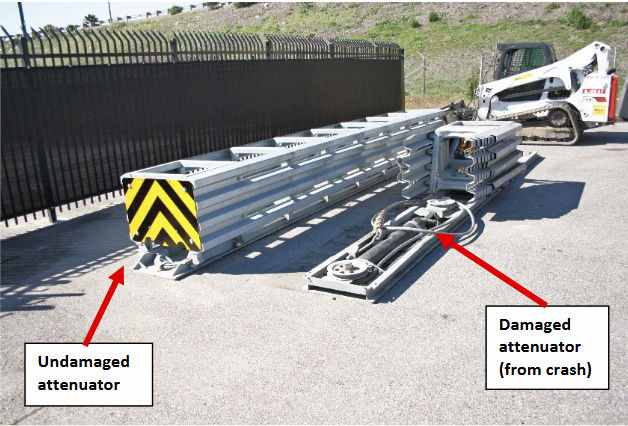

The crash attenuator had been damaged eleven days before the fatal Tesla crash, suggesting that this is a somewhat dangerous intersection. Here’s a photo of the damaged crash attenuator mentioned in the report alongside an intact specimen. Note how hard it is to distinguish the crash attenuator without the yellow and black end plate:

Note how the damaged crash attenuator presents a low-contrast obstacle while the intact attenuator presents a highly visible, high-contrast obstacle, as its design intends. I think it’s relatively easy for a human driver to see the damaged crash attenuator as a road hazard. I’m somewhat dubious that a visually trained neural network would reach the same conclusion. I’ve personally driven this stretch of road in heavy traffic many times and I barely trust myself to drive it, much less the infant-stage technology designed into today’s self-driving cars.

Here’s a detailed chronology of the crash as recorded in the Tesla’s black box and directly quoted from the NTSB preliminary report (I’ve added bold face for emphasis):

- The Autopilot system was engaged on four separate occasions during the 32-minute trip, including a continuous operation for the last 18 minutes 55 seconds prior to the crash.

- During the 18-minute 55-second segment, the vehicle provided two visual alerts and one auditory alert for the driver to place his hands on the steering wheel. These alerts were made more than 15 minutes prior to the crash.

- During the 60 seconds prior to the crash, the driver’s hands were detected on the steering wheel on three separate occasions, for a total of 34 seconds; for the last 6 seconds prior to the crash, the vehicle did not detect the driver’s hands on the steering wheel.

- At 8 seconds prior to the crash, the Tesla was following a lead vehicle and was traveling at about 65 mph.

- At 7 seconds prior to the crash, the Tesla began a left steering movement while following a lead vehicle.

- At 4 seconds prior to the crash, the Tesla was no longer following a lead vehicle.

- At 3 seconds prior to the crash and up to the time of impact with the crash attenuator, the Tesla’s speed increased from 62 to 70.8 mph, with no precrash braking or evasive steering movement detected. (Note that the driver’s hands were not recorded as being on the steering wheel at this time.)

(The speed limit was 65 mph on this stretch of road, according to the report. So technically, the Tesla was speeding by about 9% at impact.)
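As a quick sanity check on the numbers in the chronology, here is a small Python sketch. The speeds, the 65 mph limit, and the 3-second window come from the NTSB figures quoted above; the function names are mine, and the distance estimate assumes the speed rose at a constant rate over those 3 seconds, which the report does not state.

```python
MPH_TO_FTPS = 5280 / 3600  # feet per second in one mile per hour


def percent_over_limit(speed_mph: float, limit_mph: float) -> float:
    """Return how far a speed exceeds the limit, as a percentage of the limit."""
    return (speed_mph - limit_mph) / limit_mph * 100


def distance_ft(start_mph: float, end_mph: float, seconds: float) -> float:
    """Estimate distance covered while speed changes from start to end.

    Assumption (not from the report): the speed changes at a constant rate,
    so the average speed is the midpoint of the two values.
    """
    average_mph = (start_mph + end_mph) / 2
    return average_mph * MPH_TO_FTPS * seconds


if __name__ == "__main__":
    over = percent_over_limit(70.8, 65)
    covered = distance_ft(62, 70.8, 3)
    print(f"Over the limit at impact: {over:.1f}%")
    print(f"Approximate distance covered in the final 3 seconds: {covered:.0f} ft")
```

Under that constant-acceleration assumption, the car covered nearly the length of a football field in the final 3 seconds, which gives a sense of how little room there was for a late correction.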

The NTSB report makes no conclusions and the NTSB continues to investigate all aspects of the accident.

Now here’s what I wrote in an earlier article describing this unfortunate accident (see “Maybe You Can’t Drive My Car. (Yet)”):

“In the case of the Tesla crash, the black box recorder data from the doomed Model X SUV shows that the vehicle knew there was some sort of a problem because it reportedly tried to get the human driver to take control for several seconds before the accident occurred. The black box data indicates that the human driver’s hands were not on the wheel for at least six seconds before the fatal crash.”

The NTSB report is consistent with the details in my earlier article, so are there any new lessons so far, prior to any conclusions the NTSB will make?

Here’s one: I think Tesla’s decision to call this self-driving feature “Autopilot” is very unfortunate. It implies that the car is perfectly capable of driving itself, and this just is not true. Yet there’s evidence that Mr. Huang strongly believed that the technology was reliable. He repeatedly took his hands off the wheel in heavy Silicon Valley traffic on US-101. The accident occurred at 9:27 a.m., which is the tail end of rush hour and still plenty busy.

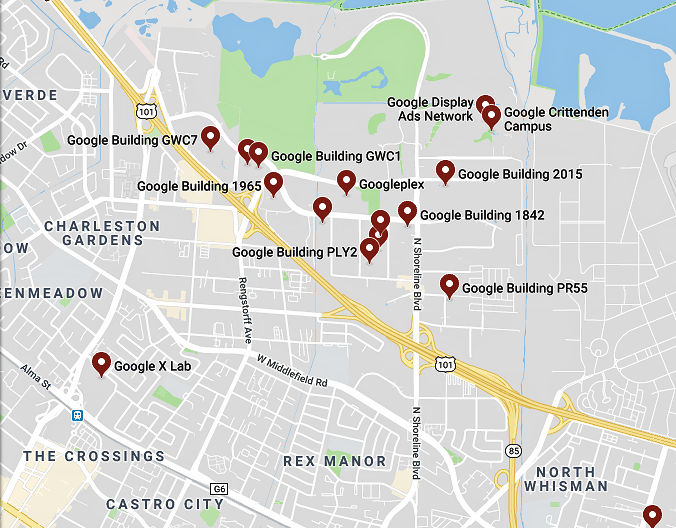

You should know that this intersection is where two major California highways, Highway 85 and US-101, converge and that the Mountain View Googleplex is just north of this intersection. Here’s a map showing the vicinity around the crash site, which is in the lower right portion of the graphic:

This is always a dangerous intersection—one to be treated with a lot of respect and caution.

In addition, the Tesla Autopilot produced three warnings (two visual and one auditory) during the final 18 minutes 55 seconds of Autopilot operation. The car knew and announced that it didn’t feel capable of driving in this traffic.

That tells me that we have yet to think through and solve the problem of car-to-human-driver handover—and that’s a big problem. Tesla’s Autopilot reportedly gives the driver at least two minutes of grace period between the time it asks for human driving help and the time it disengages the Autopilot. Mr. Huang’s repeated grabbing and releasing of the steering wheel suggests that it may be overly easy to spoof that two-minute period by holding the wheel for just a few seconds to reset the timeout.
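To make the spoofing concern concrete, here is a toy Python model of a hands-off timer that resets whenever hands are detected. This is emphatically not Tesla’s logic, which I have not seen. The 120-second grace period is the reported figure mentioned above, and the driving patterns, the function name `alert_times`, and the reset rule are all hypothetical.

```python
from typing import Iterable, List


def alert_times(hands_on: Iterable[bool], grace_s: int) -> List[int]:
    """Return the seconds at which a hands-off alert would fire.

    Toy model (an assumption, not Tesla's implementation): a counter of
    consecutive hands-off seconds resets to zero whenever hands are
    detected, and an alert fires each time the counter reaches grace_s.
    """
    alerts: List[int] = []
    hands_off_s = 0
    for second, detected in enumerate(hands_on, start=1):
        if detected:
            hands_off_s = 0  # any touch resets the countdown
        else:
            hands_off_s += 1
            if hands_off_s == grace_s:
                alerts.append(second)
                hands_off_s = 0  # restart the countdown after alerting
    return alerts


# Hypothetical 10-minute drives, sampled once per second.
brief_touches = [(t % 100) < 3 for t in range(600)]  # 3 s on the wheel per 100 s
never_touching = [False] * 600

if __name__ == "__main__":
    print("Alerts with brief touches:", alert_times(brief_touches, grace_s=120))
    print("Alerts with no touches:   ", alert_times(never_touching, grace_s=120))
```

In this toy model, a driver who touches the wheel for 3 seconds out of every 100 never triggers a single alert, even though his hands are off the wheel 97% of the time. Any timer that resets on a momentary touch shares that weakness, whatever the real grace period is.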

A self-driving car that can be spoofed like this is a dangerous piece of technology, and yet I see no good solution to this problem. The self-driving mechanism of a car simply cannot release control of the vehicle until the human driver has retaken control, and there’s no good way to force the driver to take over. (Electric-shock electrodes in the seat cushions? I don’t think so.)

Meanwhile, Tesla continues to upgrade the self-driving features of its cars and continues to call this collection of features “Autopilot.”

Somehow, this all reminds me of the title of a book published in 1943 (before I was born) and the subsequent movie made two years later. It’s the autobiography of Brigadier General Robert Lee Scott Jr., USAF. Its title:

“God Is My Co-Pilot.”

First, according to the NTSB quote above, the hands-on-wheel “alerts were made more than 15 minutes prior to the crash,” so NO alerts were issued during the final 15 minutes. The driver was keeping the system satisfied.

This is confirmed by the NTSB quote above: “During the 60 seconds prior to the crash, the driver’s hands were detected on the steering wheel on three separate occasions, for a total of 34 seconds; for the last 6 seconds prior to the crash, the vehicle did not detect the driver’s hands on the steering wheel.”

So this is not a hand-off problem, as the vehicle was in lane and following the car ahead normally right up to the last few seconds of the trip.

What is critical is this part of the NTSB chronology: “At 7 seconds prior to the crash, the Tesla began a left steering movement while following a lead vehicle. At 4 seconds prior to the crash, the Tesla was no longer following a lead vehicle.”

Count One-Thousand-One, One-Thousand-Two, One-Thousand-Three. That is how long it took the car to veer out of the lane, and during that time the car did NOT give the driver any feedback telling him to assume control.

Count One-Thousand-One, One-Thousand-Two, One-Thousand-Three, One-Thousand-Four. That is how long it took the car to speed up right into the barrier and kill the driver without alerting him.

This was a Tesla system error. Tesla is at fault for producing an active control product rather than a passive product that requires the driver to actually drive while it watches for events like lane departure or following too closely and provides warnings.

Who wants to use or trust a technology that will kill you if you are distracted for 7 seconds?