Lidar is getting a lead role as part of a cast of many sensor technologies needed to keep automated cars and trucks from trying to occupy the same space.

For the longest time, Lidar has languished as that dreaded tool of final resort for the state trooper. It’s like radar (originating as “light radar” and back-ronymed as Light Imaging, Detection, and Ranging, available in upper- or lower-case models), except for the annoying fact that it’s only a dot. Radar conveniently fills the entire air with its signal so that you can detect its presence. Not so the lidar dot. It can be aimed at your fender in total stealth. License and registration, please…

But now, suddenly, it’s our friend. Why? Well, if cars are going to drive themselves, they have to see. Regular video cameras have a role, but not so much in the dark. Lidar (and radar) help to plumb the darkness and other low-visibility scenes. A couple of announcements recently are what make lidar our topic of the day.

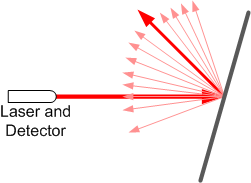

To be clear, lidar is indeed like radar, except that it uses infrared laser light. Actually, in theory, it can use any wavelength of light; it’s just that the systems we’re most interested in have chosen infrared. That light bounces off of stuff, and the reflection is detected. The timing of the reflection, as well as the characteristics of the returned light (phase and wavelength, if we’re talking coherent laser light), provide information on where stuff is and how fast it’s moving relative to the lidar.
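For the numerically inclined, here is a minimal sketch of the two basic measurements just described: range from the round-trip time of the reflection, and relative (radial) speed from the Doppler shift of coherent light. The function names and the 905-nm wavelength in the example are assumptions chosen for illustration, not details from either company's system.

```python
C = 299_792_458.0  # speed of light in vacuum, m/s


def range_from_round_trip(t_seconds: float) -> float:
    """Distance to the target; the light covers that distance twice."""
    return C * t_seconds / 2.0


def radial_velocity_from_doppler(doppler_shift_hz: float, wavelength_m: float) -> float:
    """Closing speed along the beam for a reflected (two-way) Doppler shift."""
    return doppler_shift_hz * wavelength_m / 2.0


if __name__ == "__main__":
    # Hypothetical example: an echo that arrives 400 ns after the pulse left.
    print(f"range: {range_from_round_trip(400e-9):.2f} m")
    # Hypothetical example: a 22.1-MHz shift on an assumed 905-nm infrared beam.
    print(f"speed: {radial_velocity_from_doppler(22.1e6, 905e-9):.2f} m/s")
```

The factor of two shows up in both functions for the same reason: the light makes the trip out and back.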

You might object that, unless you shine the laser directly across at a surface perpendicular to the beam, the reflection will veer off in some other direction, and you won’t be able to detect the return. Which is… true. But it’s a problem only if the target is perfectly reflective (or nearly so). If not, then there’s enough scattering in the reflection that some of it can get back to the detector.

Most recently, lidar has been embodied in slightly gawky units atop cars that just scream, “Something is different about this vehicle!” Using rotating mirrors, a beam can be steered 360° around the vehicle and up and down (to some extent) to map out everything that’s within range. But there’s some thought that, if we’re serious about letting the neighbors see us inside one of those, we need to improve the aesthetics.

This came up in a presentation by MicroVision at last fall’s MEMS Executive Congress. They have a MEMS mirror setup that we saw a couple of years ago as used in their pico-projector application. They use the same basic setup for heads-up displays and for lidar (which uses one extra ASIC); they adapt to the application by changing out the laser diodes and software.

Rather than rotating 360°, their MEMS mirror waves back and forth in a sinusoidal scan, controlled by a 27-Hz resonator; individual pixels are defined by turning the laser diode on and off. The faster the scan, the faster you can get an overview of what’s nearby. By slowing the scan, you get more detail. It sounds simple in principle, but they say that they have to battle jitter in the mirror and temperature effects on the laser. Control and compensation are the tough parts.
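To see why the timing matters, here is a rough sketch of a sinusoidal scan, assuming an ideal mirror with no jitter or temperature drift (which is exactly the part they say is hard). Because the mirror moves fastest mid-sweep and slowest at the edges, pixels evenly spaced in angle need unevenly spaced laser pulses. The amplitude, the usable fraction of the sweep, and the function names are all hypothetical; only the 27-Hz figure comes from the description above.

```python
import math


def mirror_angle(t: float, amplitude_deg: float, freq_hz: float) -> float:
    """Angle of an ideal sinusoidally scanning mirror at time t (seconds)."""
    return amplitude_deg * math.sin(2.0 * math.pi * freq_hz * t)


def pulse_times_for_uniform_angles(n_pixels: int, amplitude_deg: float,
                                   freq_hz: float, usable_fraction: float = 0.9):
    """Laser firing times (seconds, centered on t = 0) that give pixels
    evenly spaced in angle across one rising half-sweep.

    Only the central `usable_fraction` of the sweep is used, because the
    mirror nearly stops at the turnaround points. Requires n_pixels >= 2.
    """
    max_angle = usable_fraction * amplitude_deg
    times = []
    for k in range(n_pixels):
        angle = -max_angle + 2.0 * max_angle * k / (n_pixels - 1)
        times.append(math.asin(angle / amplitude_deg) / (2.0 * math.pi * freq_hz))
    return times


if __name__ == "__main__":
    # Hypothetical +/-20 degree sweep at the 27 Hz quoted above.
    for t in pulse_times_for_uniform_angles(11, 20.0, 27.0):
        print(f"t = {t * 1e3:+7.3f} ms  angle = {mirror_angle(t, 20.0, 27.0):+6.2f} deg")
```

The printed gaps between firing times are widest at the edges of the sweep and tightest in the middle, which hints at why mirror jitter translates directly into pixel placement error.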

Meanwhile, Velodyne, inventor of today’s rooftop units, has weighed in with a new technology announcement. Their current puck-shaped units are in high-volume production for so-called “on-demand” cars, or “robo-cabs” (which aren’t owned by individuals, so there are no neighbors to cast disapproving looks). Their announcement speaks of a compressed, solid-state version of this production sensor.

They’re being coy about exactly what they’re doing (they denied that it uses a diffraction grating; they didn’t deny or confirm that it uses a micro-mirror); they may reveal more later this year. They’re leveraging GaN technology in order to support a wide range of power. The various chips are co-packaged into a single system-in-package module.

So with something small like either of these, can you now take the prominent unit atop the car and make it hug close to the roof? That’s not what’s envisioned (and you can imagine that, the closer the laser gets to the actual roof of the car, the more limited its view of stuff next to the car). Instead, they’re talking about placing multiple units around the car – maybe two on each side, plus one front and one back. There’s obviously some work to do to integrate the results of each unit into a single coherent image of what’s in the vicinity, but, assuming that’s doable, this can solve the… optics problem. (If the price is right.)
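The geometric core of that integration work can be sketched simply, assuming each unit reports points in its own flat (2-D) frame and that its mounting position and heading on the car are known exactly. Real systems would also have to handle 3-D, calibration error, and timing differences between units; the function names and mounting values here are hypothetical.

```python
import math


def to_vehicle_frame(points, mount_x, mount_y, mount_yaw_deg):
    """Rotate and translate (x, y) points from one sensor's frame into the
    vehicle's frame, given where the sensor sits and which way it faces."""
    yaw = math.radians(mount_yaw_deg)
    c, s = math.cos(yaw), math.sin(yaw)
    return [(mount_x + c * x - s * y, mount_y + s * x + c * y) for x, y in points]


def merge_units(units):
    """Combine several units' points into one list in the vehicle frame.

    `units` is a list of (points, (mount_x, mount_y, mount_yaw_deg)) pairs.
    """
    merged = []
    for points, (mx, my, yaw) in units:
        merged.extend(to_vehicle_frame(points, mx, my, yaw))
    return merged


if __name__ == "__main__":
    # Hypothetical mounts: one unit at the front facing forward, one at the
    # rear facing backward; each sees something 5 m straight ahead of itself.
    front = ([(5.0, 0.0)], (2.0, 0.0, 0.0))
    rear = ([(5.0, 0.0)], (-2.0, 0.0, 180.0))
    for x, y in merge_units([front, rear]):
        print(f"({x:+.2f}, {y:+.2f})")
```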

One question came up at the MEMS conference regarding sensor interference. Picture all these cars rambling around, zapping each other with light beams like so many light sabers. How do you distinguish your own lidar backsplash from the light (direct or reflected) from someone else’s laser – or even other lasers on your own car? If they’re all the same frequency (within natural variations), wouldn’t this cause chaos? It’s not something we would have seen up to this point, since we don’t have large numbers of cars interfering with each other.

The immediate answer wasn’t obvious (ok, it probably would have been to someone deeply steeped in the technology, which was not us), and there was some follow-on conversation to sort it through. I came up with some mitigation strategies, but, on further reflection – pun intended – I concluded that, at least for this application and one possible interference scenario, it’s probably not a practical issue. But there may well be other mitigation approaches in play as well.

I did some internet sleuthing briefly to see if the answer was out there. The question certainly was, but all the answers I saw posted were speculative – and heck, I can do that. So this looks like a topic that deserves some attention on its own; I’ll look into it for a possible future discussion.

All of this said, there are other ways of handling lidar-like applications. Only we’ll save that for yet another future story (yes, I’m a tease), since it involves a very different type of system.

What do you think of MicroVision’s and Velodyne’s smaller lidar?

Serial beam scanning for a 2-1/2 D image with these works pretty well for large objects that can even be pretty distant … like mapping the spaces with buildings, bridges, trees, walls, fences, cars, curbs, etc. Especially when the vehicle isn’t moving.

The pixel resolution however is very poor, and doesn’t significantly aid in detecting small objects (small children for example) out at the stopping distance range the guidance system needs.

That gets worse when the vehicle is moving (actually 6-axis bouncing around with road-induced motion) so that scan lines are not well defined in the mapped image space. This effectively cuts the already poor resolution. Slowing the scan rate just makes the motion-induced jitter worse, rather than actually improving resolution.

It’s much easier to dynamically stabilize frame-at-a-time capture devices – at least all the pixels mapped from the target image then have a fixed relationship based on the sensor geometry and known lens distortions.

Otherwise the LIDAR unit needs some significant physical stabilization … at least 3 axis, if not 6 axis to isolate vehicle motion from the sensor, for at least 3-5 frames in order to recognize small objects.

How many pixels are required to identify a child at wet/icy pavement stopping distance at 45mph? Does the sensor exceed that? Does the sensor have at least human eye pixel resolution?
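A rough way to put numbers on these questions is sketched below. Every input is an assumption made for illustration: the friction coefficients for wet and icy pavement, the reaction time, the 0.3-m target width, and the 0.1° angular resolution are placeholders, not specs for any real sensor. The stopping distance is the usual reaction distance plus v²/(2μg), and the pixel count uses the small-angle approximation.

```python
import math

MPH_TO_MPS = 0.44704   # exact conversion factor
G = 9.81               # gravitational acceleration, m/s^2


def stopping_distance_m(speed_mph: float, friction: float, reaction_s: float) -> float:
    """Reaction distance plus braking distance v^2 / (2 * mu * g)."""
    v = speed_mph * MPH_TO_MPS
    return v * reaction_s + v * v / (2.0 * friction * G)


def pixels_across(target_width_m: float, distance_m: float, angular_res_deg: float) -> float:
    """Approximate number of scan pixels spanning a target (small-angle)."""
    return target_width_m / (distance_m * math.radians(angular_res_deg))


if __name__ == "__main__":
    # Assumed values: mu = 0.4 (wet) and 0.1 (icy), 0.5 s reaction time,
    # a 0.3 m wide target, and 0.1 degree between adjacent pixels.
    for label, mu in (("wet", 0.4), ("icy", 0.1)):
        d = stopping_distance_m(45.0, mu, 0.5)
        n = pixels_across(0.3, d, 0.1)
        print(f"{label}: stop in about {d:.0f} m, about {n:.1f} pixels across the target")
```

Plugging in a sensor's actual angular resolution and a recognition threshold (how many pixels a classifier needs) would turn this into a direct answer.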

Motion blur is easily eliminated with MicroVision scanning tech. You might want to study the technicals before showing such doubt.

Whatever capabilities you might expect from a person will be exceeded by the machine. Human eye resolution has nothing to do with it especially since we are talking 360 degrees viewing. What human eye does that?

I bet you had a hard time buying your 1st computer.

“How do you distinguish your own lidar backsplash from the light (direct or reflected) from someone else’s laser – or even other lasers on your own car?”

Direct-Sequence Optical Code Division Multiple Access (DS-OCDMA). Google it.
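For anyone who would rather see the idea than search for it, here is a toy sketch of the code-division principle: each lidar keys its light on and off with its own chip sequence, and the receiver correlates what it sees against its own code, so its echo stands out even with another unit's light mixed in. This is only an illustration of the concept. Real DS-OCDMA systems use carefully designed code families rather than the seeded pseudo-random codes assumed here, and nothing in the article says either vendor does this.

```python
import random


def make_code(n_chips: int, seed: int):
    """Pseudo-random on/off (1/0) chip sequence; a stand-in for a designed code."""
    rng = random.Random(seed)
    return [rng.randint(0, 1) for _ in range(n_chips)]


def transmit(code, delay: int, total_len: int):
    """Received light intensity from one coded burst arriving after `delay` chips."""
    signal = [0.0] * total_len
    for i, chip in enumerate(code):
        signal[delay + i] += chip
    return signal


def estimate_delay(received, code, max_delay: int) -> int:
    """Slide a zero-mean copy of our own code over the received signal and
    return the delay with the strongest correlation."""
    reference = [2 * chip - 1 for chip in code]
    best_delay, best_score = 0, float("-inf")
    for delay in range(max_delay + 1):
        score = sum(r * received[delay + i] for i, r in enumerate(reference))
        if score > best_score:
            best_delay, best_score = delay, score
    return best_delay


if __name__ == "__main__":
    n_chips, max_delay = 511, 200
    total_len = n_chips + max_delay
    mine = make_code(n_chips, seed=1)      # our lidar's code (hypothetical)
    theirs = make_code(n_chips, seed=2)    # an interfering lidar's code
    echo = transmit(mine, 37, total_len)
    interference = transmit(theirs, 90, total_len)
    received = [a + b for a, b in zip(echo, interference)]
    print("estimated delay in chips:", estimate_delay(received, mine, max_delay))
```

Multiplying the estimated delay by the chip duration gives the round-trip time, and from there the range.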